Python rose as a serious competitor to C and C++ inheriting the object-oriented programming of C++ and unleashing the capacity to develop machine programming, desktop apps, web apps and mobile apps as well. Reflected by its punch-line “Batteries included”, Python allows to develop vast applications in a limited time period. This is made possible by the inclusion of required modules and methods in their platform. For this reason, it ranks the highest among software developers for Big Data apps. The humongous library of Python often poses the issue of missing out on in-built optimization functions. This could create performance and syncing issues later. Here are 5 common Python mistakes made by ddevelopers and a brief on avoiding them.

Types of Data: When working on Python, one could have access to large databases. This could result in improper determination of the data type in the database. Before executing a query in the application, the developer must go through each data and its type to avoid any future issues. Python provides an overview of Data Types in their developers’ manual with emphasis on special data types like fixed-type arrays, sets, dates and time, synchronized queues and heap queues. You can also use built-in data types, especially set, dict, tuple and list.

Reinventing the wheel? : A common phrase in developers’ vocabulary, reinventing the wheel refers to the act of duplicating a basic method which has been developed and optimized previously. Karolina Alexiou, a software developer considers the reinventing of wheel to be a mistake: Python is endowed with a strong functionality which has been heavily screened for efficiency; in cases like the need to load CSV files onto memory to use them, developers end up expending a large amount of time customizing the CSV-loading functions. This leaves the developers with dictionary of the dictionaries, only slowing them down in querying.

The preoccupation with customization of functions leaves them with almost-nil time and energy to derive meaningful insights from the available data. Karolina suggests that a few Google clicks and suggestions from an expert developer on data analysis library can resolve this issue without unnecessary expenditure of time. However, there are others (like developers under the virtual keyname of J.D. Isaacks and Dimitri C in the Programmers StackExchange forum) who point out that reinventing the wheel gives one complete control on the software and also creates opportunities for ingenious re-doings.

The ultimate decision to the question, “Should I reinvent the wheel?” has to be answered depending on the context of your work. It is helpful when: the aim is to learn and understand the complex working, the framework or library is dense and copious and you only need light functionality; and you have hit an original idea and believe that you can make a contribution that others’ implementations have not. However, reinventing the wheel is a bad idea when: the optimum level of functionality already is in place and stable, the version you have cooked up has nothing new to offer and is missing many features, your framework includes bugs or restrictions and has unit tests absent in comparison to the previous ones. Thus, if you are considering to reinvent the wheel, then remember that the context must determine if you need it or not.

Analysis of Data: Python is a language of scripting and developing and it is not advisable to use it for analysis of Big Data. Python can only be used for basic analysis and you must use a third-party framework for doing the heavy data lifting work. The two open-source brothers of the Big Data related tasks in the market are Spark and Hadoop. Bernard Marr, talking about the ideal Big Data framework in Forbes, points out that Speed overrides Hadoop due to its high speed and its capacity to deal with data in memory, that is, through transference of data from fragmented physical stored to swifter logical RAM memory.

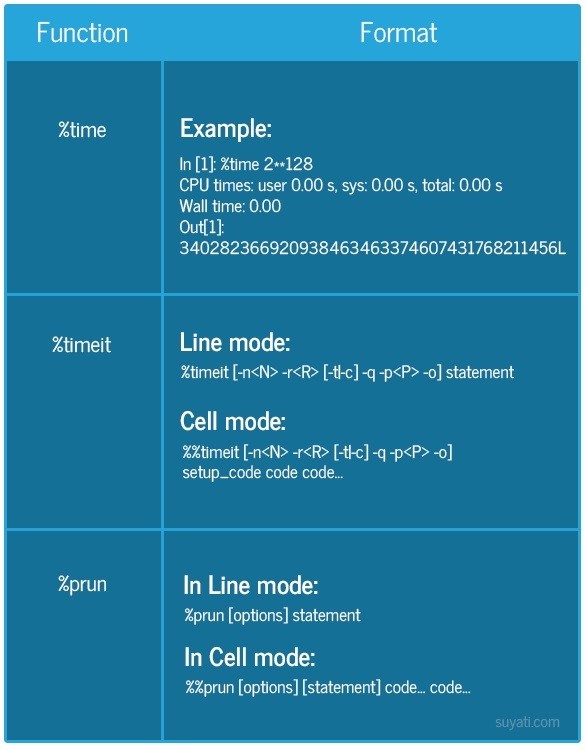

Orienting for Performance: The slow working of a program which does not produce quick output hinders the work flow of the developers. They can merely do about 30 rounds every day putting a boundary on the experiments, tweaking and executing which can be carried out. Many utility services are available to speed up the working of code. These are also available within IPython Interactive Shell. IPython has four magic functions for timing and profiling: %time and %timeit (check duration to run script), %prun (check duration to run each script-function), %lprun (check duration to run each line in the function) and %mprun and %memit (check the amount of memory used by script).

Format for using %time, %timeit and %prun

Handling time and time zones: While the developer can derive time using date-time parameter, it must be transitioned into the local time using the appropriate time zone. This can be done by executing the time stamp in the code. Beginners find epoch time a hard concept to digest. Epoch time is the identical number across the world at any given moment. This number has to be translated into hours and minutes of the day, according to the timezone and time of year. These conversions can be handled using datetime and pytz.

Datetime module:

This is used for working with dates and times, together and in separation. There are two kinds of date and time objects: naive and aware. While the aware ones have adequate knowledge of the applicable algorithm and time adaptations (like day-light saving time and time zone), the naive object does not contain such information and while it is easy to work with them, one could miss out some aspects of reality. You can find more information on using datetime module here.

Pytz module:

The Olson tz database is brought into Python using pytz module. This allows precise and across platforms calculation of time zone when using Python 2.4 and higher versions. An example of local time and date arithmetic is:

>>> from datetime import datetime, timedelta

![]()

>>> from pytz import timezone

>>> import pytz

>>> utc = pytz.utc

>>> utc.zone

‘UTC’

>>> eastern = timezone(‘US/Eastern‘)

![]()

![]()

>>> eastern.zone

‘US/Eastern’

>>> amsterdam = timezone(‘Europe/Amsterdam‘)

>>> fmt = ‘%Y-%m-%d %H:%M:%S %Z%z‘

In a nutshell, any Python developer can avoid some common mistakes by following these measures:

![]()

- The type of data being used is appropriate;

- Gauge the necessity of reinventing the wheel;

- Use a third-party utility for analysis of Big Data;

- Tuning the code to performance; and

- Grasping conversion of Epoch time and related modules.